Autonomous vehicles are among the most significant technological developments of recent years. These vehicles have the potential to transform how people travel, how goods are transported, and even how cities are designed. By combining artificial intelligence (AI), sensors, high-speed connectivity, and advanced software, self-driving cars go beyond being just another innovation; they represent a shift that could affect safety, the environment, business, and even everyday human behaviour.

According to Nathan (2025), an autonomous vehicle is a car that can observe its surroundings, process information, and make driving decisions with little or no human involvement. Unlike traditional vehicles, which rely entirely on a driver’s judgment, autonomous vehicles use sensors and software to understand the road environment. This allows them to steer, accelerate, brake, and respond to changing conditions in real time. The aim is to match human driving ability and eventually reduce the errors humans make, improving both safety and efficiency.

This article covers what autonomous vehicles are, the levels of driving automation, the technologies that make them work, and the technical challenges they face. The goal is to give a clear picture of how autonomous vehicles work.

SAE Levels of Driving Automation

SAE International, formerly the Society of Automotive Engineers, is an international organisation that creates technical standards for cars and other vehicles. One of its most influential contributions to driving automation is the SAE J3016 standard (2021 revision). The standard defines six “SAE Levels” of driving automation, from Level 0 to Level 5. Brad (2021) describes the levels as follows.

- Level 0 – No Automation

At this stage, the driver is fully responsible for every aspect of driving. This includes key driving functions such as steering, accelerating, braking, parking, and other manoeuvres required to operate or stop the vehicle safely.

According to Radovan (2024), Level 0 cars have no driving automation, even though some of them include driver assistance features. These features do not automate driving; they help the driver through alerts and momentary interventions. Examples include automatic emergency braking, forward collision warnings, and lane departure alerts.

- Level 1 – Driver Assistance

A Level 1 vehicle assists the driver with one function at a time: either steering, or accelerating and braking. The driver must remain alert and in complete control of all other aspects of driving.

A typical example is adaptive cruise control, where the car automatically adjusts its speed based on the traffic ahead. Another is lane keeping support: using cameras to detect lane markings, the system can provide gentle steering input or alerts if the car begins to drift without signalling. In both cases the driver remains responsible for driving, as the system only assists rather than drives the vehicle.

- Level 2 – Partial Automation

At Level 2, the vehicle can manage steering and speed control at the same time. However, the driver must remain attentive at all times and be ready to take over immediately. Level 2 vehicles are typically equipped with advanced driver assistance systems (ADAS) that can take over steering and speed control in specific scenarios.

Rambus (2022) gives the Highway Driving Assist system as an example. This technology is installed in vehicles manufactured by Genesis, Hyundai, and Kia. It can actively steer, accelerate, and brake on highways. However, drivers must keep their hands on the wheel and remain attentive, since the system is designed to assist, not replace, human control.

- Level 3 – Conditional Automation

Level 3 vehicles handle all driving tasks under certain conditions, such as in heavy traffic or on specific highways. The driver must always be available to take the wheel if the automated system requests it or stops functioning properly.

Several manufacturers have worked on this type of vehicle. For instance, according to Alex (2021), Honda has introduced a Level 3 conditional automation system known as “Honda Sensing Elite with Traffic Jam Pilot.” He states that the technology enables hands-off driving in specific, well-defined conditions. On highways, for instance, it allows drivers to go hands-free when lane-keeping is active, and it can perform lane changes with driver approval. With the Active Lane Change function enabled, the car can even change lanes and overtake vehicles on its own. To alert others, a sticker on the back of the car indicates it is capable of “Automated Drive.”

Traffic Jam Pilot adds another layer of autonomy by allowing the vehicle to operate without the driver’s constant monitoring. During this mode, Honda states the driver can watch videos or engage with infotainment features. If the driver ignores a request to take back control, the system will safely pull the car to the roadside, using hazard lights and the horn if necessary.

- Level 4 – High Automation

At Level 4 automation, the vehicle’s system takes full responsibility for driving and navigation. Passengers are not required to monitor the road or be ready to take control; the car can operate entirely on its own.

However, Level 4 highly automated driving systems are typically limited to specific geographic locations. They cannot travel outside of designated service areas or during dangerous weather conditions.

A real-world example is Waymo’s driverless taxis. These vehicles drive themselves, but only within a restricted service area. Within that area, no one controls the vehicles either physically or remotely; the car takes full responsibility for the driving task, as per Pymnts (2025).

- Level 5 – Full Automation

At Level 5 automation, vehicles handle every aspect of driving and navigation without any human input. Passengers only need to set a destination and are free to work, rest, or enjoy entertainment during the trip. Unlike lower levels of automation, Level 5 systems are designed to function independently in all weather conditions and across any type of roadway. No Level 5 vehicles currently exist. Companies worldwide are still researching and testing toward this vision.
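
The six levels can be summarised as a small lookup structure. The sketch below is only an illustration of the taxonomy described above; the names `SAELevel` and `human_role`, and the wording of the returned strings, are assumptions made for this example and do not come from the SAE standard.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """The six SAE levels described above, numbered 0 to 5."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_role(level: SAELevel) -> str:
    """Summarise what the human must do at a given level (illustrative wording)."""
    if level <= SAELevel.PARTIAL_AUTOMATION:
        # Levels 0-2: the human drives, or supervises the assistance features.
        return "drives or supervises at all times"
    if level == SAELevel.CONDITIONAL_AUTOMATION:
        # Level 3: the system drives, but may hand control back.
        return "must take over when the system requests it"
    # Levels 4-5: no takeover is expected while the system is operating.
    return "has no driving role while the system is operating"


for level in SAELevel:
    print(f"Level {level.value} ({level.name}): the human {human_role(level)}")
```

The key boundary is between Level 2 and Level 3: below it the human is always supervising, above it the system is driving while it is engaged.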

Core Sensing Technologies

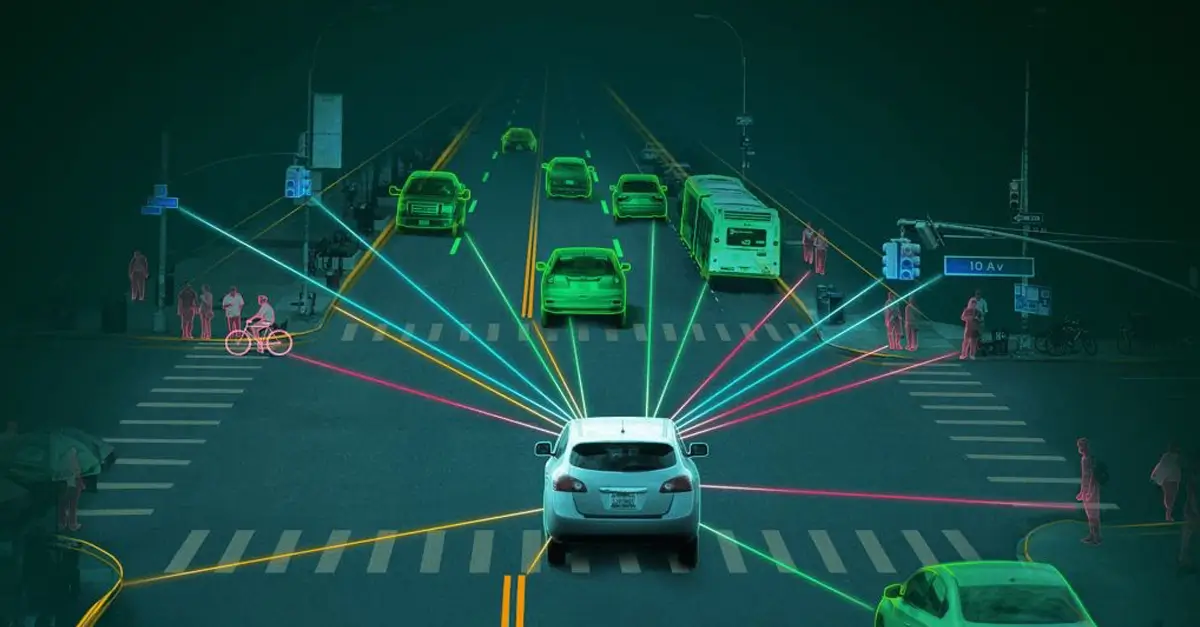

At the core of every autonomous vehicle lies a collection of advanced sensors. These devices allow the car to “see” and interpret its surroundings, helping it recognise obstacles, anticipate hazards, and make safe driving decisions. Research by Nischay (2024) highlights the key sensing technologies. Each plays a unique role, and together they provide a more complete picture of the road, as explained below.

- Light Detection and Ranging (LiDAR)

This technology works by sending out pulses of laser light and measuring how long they take to bounce back. This creates highly accurate 3D maps of the environment, often down to the centimetre. LiDAR is especially useful for spotting objects, avoiding obstacles, and helping a vehicle understand exactly where it is, even in dark or unfamiliar places.

However, it is costly and less reliable in bad weather conditions such as heavy rain, snow, or fog. Some self-driving prototypes, like those used by Waymo, depend heavily on LiDAR for precision mapping.
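
The time-of-flight idea behind LiDAR reduces to one line of arithmetic: the pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The function name `lidar_range_m` and the 200-nanosecond example are assumptions chosen for illustration; a real sensor also has to handle noise, multiple returns, and beam angles.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def lidar_range_m(round_trip_time_s: float) -> float:
    """Distance to the reflecting object for one laser pulse.

    The pulse travels out and back, so the one-way distance is half
    of the total distance covered during the round trip.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# A pulse that returns after 200 nanoseconds hit something roughly 30 m away.
print(f"{lidar_range_m(200e-9):.2f} m")
```

Because light covers about 30 cm per nanosecond, centimetre-level accuracy requires timing the return to a fraction of a nanosecond, which is part of why the hardware is expensive.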

- Radar

Radar uses radio waves to detect objects and measure their speed and distance. One of radar’s most significant advantages is that it works well in poor weather, such as fog, dust, or heavy rain, where cameras and LiDAR may struggle. It can also detect objects hundreds of meters away, giving the vehicle plenty of time to react.

Its limitation is that the images it produces are not very detailed compared to LiDAR or cameras, which makes it harder to distinguish between different types of objects. Still, radar is widely used in features like adaptive cruise control, where a car automatically maintains distance from the vehicle ahead.
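
Radar commonly measures relative speed from the Doppler effect: a wave reflected off an approaching object comes back at a slightly higher frequency. The sketch below shows that relationship only. The 77 GHz carrier frequency is an assumed example value for an automotive radar, and the function name is hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def radial_speed_m_per_s(doppler_shift_hz: float, carrier_hz: float = 77e9) -> float:
    """Relative speed along the line of sight, from the measured Doppler shift.

    The factor of 2 accounts for the wave being shifted once on the way
    to the target and again on the way back. A positive result means the
    target is approaching.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT_M_PER_S / (2.0 * carrier_hz)


# A shift of about 5.1 kHz at 77 GHz corresponds to roughly 10 m/s (36 km/h).
print(f"{radial_speed_m_per_s(5137.0):.2f} m/s")
```

This is what adaptive cruise control needs most: not a detailed image of the car ahead, but how far away it is and how quickly the gap is closing.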

- Cameras

Cameras function much like human eyes; they are used to capture images of the road in full colour. They can read traffic lights, recognise road signs, detect lane markings, and even notice hand signals from pedestrians or cyclists. This makes cameras essential for understanding the driving environment and following road rules.

They are also relatively inexpensive compared to LiDAR. The challenge is that cameras depend on good lighting and cannot measure depth as accurately as LiDAR. Systems like Tesla’s Autopilot rely heavily on cameras, using AI-powered software to interpret what the camera sees.

- Sensor Fusion

As no single sensor is perfect, autonomous vehicles use sensor fusion to compensate for the weaknesses of each one. Sensor fusion combines data from LiDAR, radar, and cameras into one unified picture of the environment. For instance, radar can “see” through fog when cameras cannot, while LiDAR provides precise distance measurements that cameras alone cannot achieve.

This integration, often powered by deep learning, gives the vehicle a more complete and reliable understanding of the world around it. In practice, sensor fusion makes self-driving cars far safer and more dependable than if they relied on just one type of sensor.
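
One simple way to see why combining sensors helps is inverse-variance weighting, a classical technique in which each reading is weighted by how much it is trusted. This is a stand-in for illustration, not the deep-learning fusion mentioned above, and the readings and variances below are made-up numbers.

```python
def fuse_estimates(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Sensors with a lower
    variance (more trusted) pull the result toward their reading.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused_value, 1.0 / total_weight


# Hypothetical distance readings to the same obstacle, in metres:
readings = [
    (25.10, 0.01),  # LiDAR: precise
    (24.80, 0.25),  # radar: coarser
    (26.00, 1.00),  # camera depth estimate: least certain
]
distance, variance = fuse_estimates(readings)
print(f"fused distance: {distance:.2f} m (variance {variance:.4f})")
```

The fused variance is smaller than that of the best individual sensor, which is the statistical version of the claim that the combined picture is more reliable than any single source.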

AI and Machine Learning for Navigation

Artificial intelligence (AI) acts as the “brain” of autonomous vehicles. It takes information from sensors, makes sense of the environment, predicts what might happen next, and decides how the car should move. Without AI, self-driving vehicles would not be able to operate safely and intelligently. The main tasks of AI in navigation are explained below.

- Perception

Perception helps the autonomous car “see” the world around it. AI uses deep learning methods, especially convolutional neural networks (CNNs), to process data from cameras, radar, and LiDAR. These systems detect and recognise objects such as other cars, pedestrians, cyclists, animals, traffic lights, and road signs. By interpreting this data correctly, the AI system builds a picture of the vehicle’s surroundings and can act accordingly.

- Prediction

Once the car understands its surroundings, it must predict how other road users might move. This includes estimating whether a pedestrian will step into the street or where another vehicle might turn. AI models like recurrent neural networks (RNNs) and long short-term memory (LSTM) networks are often used to make these predictions based on patterns over time.
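
Before any neural network is involved, the simplest possible predictor assumes that an object keeps moving at its current velocity. The sketch below shows that constant-velocity baseline, not an RNN or LSTM; the `TrackedObject` class and its field names are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    """Position (metres) and velocity (metres per second) in a flat 2D frame."""
    x_m: float
    y_m: float
    vx_m_per_s: float
    vy_m_per_s: float


def predict_positions(
    obj: TrackedObject, horizon_s: float, step_s: float
) -> list[tuple[float, float, float]]:
    """Future (time, x, y) points, assuming the velocity stays constant."""
    steps = int(round(horizon_s / step_s))
    return [
        (k * step_s, obj.x_m + obj.vx_m_per_s * k * step_s, obj.y_m + obj.vy_m_per_s * k * step_s)
        for k in range(1, steps + 1)
    ]


# A pedestrian 3 m from the lane centre (y = 0), walking toward it at 1.5 m/s.
pedestrian = TrackedObject(x_m=0.0, y_m=-3.0, vx_m_per_s=0.0, vy_m_per_s=1.5)
for t, x, y in predict_positions(pedestrian, horizon_s=2.0, step_s=0.5):
    print(f"t={t:.1f}s -> x={x:.2f} m, y={y:.2f} m")
```

Learned models earn their place in exactly the cases where this baseline fails: a pedestrian who stops at the kerb, or a car that is about to turn.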

- Path Planning

Path planning is about deciding where the vehicle should go next. The system charts a safe and efficient route that follows traffic rules, avoids collisions, and adjusts to road conditions.
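
A toy version of path planning can be written as a search over a grid in which some cells are blocked. The sketch below uses breadth-first search, which finds a shortest route when every move costs the same. Real planners work with continuous space, vehicle dynamics, and traffic rules, so treat the grid, the function name `plan_path`, and the example map as illustrative assumptions.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (1 = blocked), or None."""
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back from the goal to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # the goal cannot be reached


# 0 = free road, 1 = obstacle. The only gap in the middle row is at column 2.
grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
path = plan_path(grid, start=(0, 0), goal=(2, 0))
print(path)
```

The planner has to detour through the single gap in the obstacle row, which is the grid-world equivalent of steering around a blocked lane.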

- Decision-Making and Control

This is the stage where the car turns plans into tangible actions. The AI integrates all the information from perception, prediction, and path planning to decide when to accelerate, brake, steer, or take emergency action. Reinforcement learning and imitation learning are often used here; the car either learns by trial and error in simulations or by copying expert human drivers.
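
To make the decision step concrete, here is a hand-written rule based on time-to-collision, that is, the gap to the object ahead divided by the speed at which the gap is closing. It is a rule-based stand-in, not a reinforcement-learning or imitation-learning policy, and the thresholds of 2 and 4 seconds are assumed values chosen only for the example.

```python
def choose_action(
    gap_m: float,
    closing_speed_m_per_s: float,
    brake_ttc_s: float = 2.0,
    caution_ttc_s: float = 4.0,
) -> str:
    """Pick a longitudinal action from the time-to-collision (TTC).

    closing_speed_m_per_s is positive when the gap is shrinking.
    The threshold defaults are hypothetical, not calibrated values.
    """
    if closing_speed_m_per_s <= 0:
        return "maintain"  # the gap is steady or growing
    time_to_collision_s = gap_m / closing_speed_m_per_s
    if time_to_collision_s < brake_ttc_s:
        return "emergency_brake"
    if time_to_collision_s < caution_ttc_s:
        return "slow_down"
    return "maintain"


for gap, closing in [(100.0, 10.0), (30.0, 10.0), (10.0, 10.0)]:
    print(f"gap={gap} m, closing at {closing} m/s -> {choose_action(gap, closing)}")
```

A learned policy replaces fixed thresholds like these with behaviour tuned from simulation or from human driving data, but the inputs and outputs have the same shape.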

Vehicle-to-Everything (V2X) Connectivity

Connectivity is crucial for autonomous vehicles (AVs). Vehicle-to-Everything (V2X) refers to the communication system that allows vehicles to connect with their surroundings: other vehicles, roadside infrastructure, pedestrians, and wider networks. Hieng (2024) breaks this communication down as follows:

- Vehicle-to-Vehicle (V2V) – this communication system enables cars to share information directly with each other in real time. A study done by NHTSA specifies that “with the help of Vehicle to vehicle communication system, if one car brakes suddenly on a highway, it can instantly alert nearby vehicles to slow down, thus preventing accidents.”

- Vehicle-to-Infrastructure (V2I) – here, vehicles exchange data with roadside infrastructure such as traffic lights, road signs, and toll systems. A typical example is a smart traffic light that tells a car how long it will be before the light turns red or green, allowing the vehicle to adjust its speed efficiently, as per Andrew (2019).

- Vehicle-to-Pedestrian (V2P) – this link lets vehicles communicate with pedestrians or cyclists, often via mobile devices or wearable tech. For instance, a pedestrian crossing the street while looking at their phone could receive an alert from an approaching autonomous vehicle. At the same time, the car would be notified of the pedestrian’s presence, reducing the risk of collisions.

- Vehicle-to-Network (V2N) – this technology expands communication to include cloud platforms and wider networks. This allows vehicles to connect with navigation systems, real-time traffic updates, weather forecasts, and even entertainment services.
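
The smart traffic light example from the V2I item can be sketched as a small calculation: if the car knows how far away the light is and how long until it turns green, it can pick a speed that arrives just as the light changes instead of braking to a stop. The `SignalPhaseMessage` class and its field names are hypothetical and do not follow any standardised V2X message format.

```python
from dataclasses import dataclass


@dataclass
class SignalPhaseMessage:
    """Hypothetical broadcast from a traffic light (not a standard format)."""
    distance_to_light_m: float
    seconds_until_green: float


def advisory_speed_m_per_s(msg: SignalPhaseMessage, speed_limit_m_per_s: float) -> float:
    """Speed that reaches the light as it turns green, capped at the limit."""
    if msg.seconds_until_green <= 0:
        return speed_limit_m_per_s  # already green: no need to slow down
    arrive_on_green = msg.distance_to_light_m / msg.seconds_until_green
    return min(speed_limit_m_per_s, arrive_on_green)


# Light is 200 m ahead and turns green in 20 s; the limit is 15 m/s (54 km/h).
message = SignalPhaseMessage(distance_to_light_m=200.0, seconds_until_green=20.0)
print(f"advised speed: {advisory_speed_m_per_s(message, 15.0):.1f} m/s")
```

Easing off to the advised speed avoids a full stop at the red light, which saves energy and smooths traffic flow behind the vehicle.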

Core Applications of V2X

The real strength of Vehicle-to-Everything (V2X) lies in its practical applications. By enabling real-time data exchange between vehicles, infrastructure, pedestrians, and networks, V2X makes driving not only smarter but also safer and more efficient. The main applications are listed below.

- Collision Avoidance – vehicles can warn each other about hazards that might not be immediately visible to their sensors.

- Dynamic Route Optimisation – by tapping into real-time traffic and infrastructure data, V2X enables vehicles to choose the fastest or most efficient routes.

- Adaptive Traffic Light Coordination – with V2X, vehicles can communicate directly with traffic lights. This allows smart signals to adjust timing based on traffic flow and the speed of cars.

- Pedestrian Detection and Safe Crossings – V2X lets vehicles communicate with pedestrians or cyclists, often via mobile devices or wearable tech, even when they are hidden from view by another car. This reduces the risk of collisions at crosswalks.

- Emergency Vehicle Prioritisation – when an ambulance or fire truck is approaching, V2X can signal nearby vehicles and traffic systems to clear the way. This helps emergency vehicles reach their destinations quickly and safely while minimising disruptions to overall traffic.

Technical Challenges of Autonomous Vehicles

Although self-driving cars are advancing rapidly, the journey from partial automation to full autonomy is still filled with obstacles. Research done by Srinivas (2025) underscores the following challenges.

- AI Limitations and Edge Cases

Artificial intelligence powers the “brain” of an autonomous vehicle. However, it still struggles with unusual and rare situations, often called edge cases. These could be complex city environments, confusing temporary road markings, or a pedestrian suddenly stepping into the street. Unlike human drivers, AI does not have natural “common sense” to fill in the gaps.

- Sensor Reliability and Environmental Conditions

The cameras, LiDAR, and radar that guide autonomous vehicles each have their own weaknesses. Cameras depend on good lighting, LiDAR can be disrupted by heavy rain or snow, and radar struggles with fine details. Together, these systems reduce blind spots, but they are not wholly dependable. For instance, in thick fog, even combined sensors may fail to “see” clearly. Backup systems can help, but they also add cost and complexity.

- Mapping and Localisation

Self-driving cars rely heavily on high-definition maps to navigate safely. These maps provide precise details about lanes, curbs, and traffic signals. The problem is keeping them updated everywhere in the world, which is time-consuming and expensive.

- Cybersecurity and Privacy

Autonomous vehicles are essentially computers on wheels, which makes them targets for cyber-attacks. Hackers could try to take control, block communications, or feed false data to the car’s sensors. There have already been tests showing that such attacks are possible. Privacy is another concern, since AVs collect enormous amounts of personal data, such as location history and, in some cases, even facial recognition data. To reduce risks, manufacturers are increasingly required to use strong protections, like encrypted communications and secure software updates.
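
One building block against false data is message authentication: the receiver checks that a message really came from a trusted sender and was not altered on the way. The sketch below uses an HMAC from Python’s standard library with a shared secret key. It shows integrity checking only, not encryption, and it is a simplification: production systems typically rely on certificate-based digital signatures and proper key management, which this example does not attempt. The message fields and the key are made up.

```python
import hashlib
import hmac
import json


def sign_message(payload: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def is_authentic(payload: dict, tag: str, key: bytes) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign_message(payload, key), tag)


shared_key = b"demo-key-not-for-production"  # hypothetical key for the example
warning = {"type": "hard_braking", "speed_m_per_s": 22.0, "lane": 2}
tag = sign_message(warning, shared_key)

tampered = dict(warning, speed_m_per_s=0.0)  # an attacker changes one field
print(is_authentic(warning, tag, shared_key))   # genuine message
print(is_authentic(tampered, tag, shared_key))  # altered message
```

Any change to the payload produces a different tag, so a vehicle can discard a forged or modified warning instead of acting on it.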

Conclusion

Autonomous vehicles are evolving every day, moving from a dream towards reality. With their ability to assist drivers or to handle driving tasks such as steering, accelerating, and braking on their own, they are gradually reshaping transport, law, and safety. Cars have progressed from no automation to high automation, which is already in use, while fully automated cars have yet to reach the market.

This progress is driven by machine learning, artificial intelligence, sensor technologies, and vehicle-to-everything connectivity. Together, these technologies let a vehicle collect and interpret information about its surroundings, predict what might happen next, make driving decisions, and communicate with other vehicles, pedestrians, and traffic lights to stay safe and avoid accidents.

Despite their broad potential, autonomous cars still face a number of challenges: the security of the data they use, unusual and rare situations known as edge cases, environmental conditions that limit what sensors can do, and a heavy reliance on high-definition maps that need regular updates. Let us embrace this technology so that it can make our lives safer and more efficient.